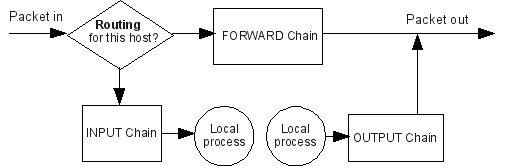

The ipset sets hold the following members and serve the following purposes:

- Mark-Masq for cases where `masquerade-all=true` or `clusterCIDR` is specified.
- Masquerade for packets to load balancer type services.
- LB ingress IP + port with `externalTrafficPolicy=local`: accept packets to load balancers with `externalTrafficPolicy=local`.
- Load balancer ingress IP + port with `loadBalancerSourceRanges`, and load balancer ingress IP + port + source CIDR: packet filter for load balancers with `loadBalancerSourceRanges` specified.
- NodePort type service TCP port and UDP port with `externalTrafficPolicy=local`: accept packets to NodePort services with `externalTrafficPolicy=local`.

IPVS proxier will fall back on IPTABLES in the following scenarios.

1. kube-proxy starts with `--masquerade-all=true`

   If kube-proxy starts with `--masquerade-all=true`, IPVS proxier will masquerade all traffic accessing a service Cluster IP, which is the same behavior as the IPTABLES proxier.

2. Specify cluster CIDR in kube-proxy startup

   If kube-proxy starts with `--cluster-cidr=<cidr>`, IPVS proxier will masquerade off-cluster traffic accessing a service Cluster IP, which is the same behavior as the IPTABLES proxier. For example, if kube-proxy is given the cluster CIDR 10.244.16.0/24, traffic from sources outside that range is masqueraded.

In these scenarios, the IPTABLES rules installed by IPVS proxier include the following:

`KUBE-POSTROUTING all -- 0.0.0.0/0 0.0.0.0/0 /* kubernetes postrouting rules */`

`MASQUERADE all -- 0.0.0.0/0 0.0.0.0/0 /* kubernetes service traffic requiring SNAT */ mark match 0x4000/0x4000`
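The masquerade decision described above can be sketched in a few lines of Python. This is only an illustration of the logic, not kube-proxy's implementation: the function names `needs_masquerade`, `apply_mark`, and `mark_matches` are hypothetical, and the only values taken from the text are the example CIDR 10.244.16.0/24 and the `0x4000/0x4000` mark match.

```python
import ipaddress

# Mark bit taken from the "mark match 0x4000/0x4000" rule in the text.
MASQ_MARK = 0x4000


def needs_masquerade(src_ip, masquerade_all=False, cluster_cidr=None):
    """Decide whether traffic from src_ip to a service cluster IP gets marked for SNAT.

    Hypothetical helper: models --masquerade-all=true and --cluster-cidr=<cidr>.
    """
    if masquerade_all:
        # masquerade-all: every source is masqueraded.
        return True
    if cluster_cidr is not None:
        # cluster CIDR given: only off-cluster sources are masqueraded.
        network = ipaddress.ip_network(cluster_cidr)
        return ipaddress.ip_address(src_ip) not in network
    return False


def apply_mark(packet_mark, masquerade):
    """Set the masquerade bit on the packet mark when masquerading is required."""
    return (packet_mark | MASQ_MARK) if masquerade else packet_mark


def mark_matches(packet_mark, value=0x4000, mask=0x4000):
    """Model of an iptables mark match value/mask: (mark & mask) == value."""
    return (packet_mark & mask) == value


if __name__ == "__main__":
    for src in ("10.244.16.5", "192.168.1.10"):
        masq = needs_masquerade(src, cluster_cidr="10.244.16.0/24")
        print(src, "masquerade:", mark_matches(apply_mark(0, masq)))
```

With the cluster CIDR set, a source inside 10.244.16.0/24 is left unmarked and a source outside it is marked, so only the latter would hit the `MASQUERADE` rule.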

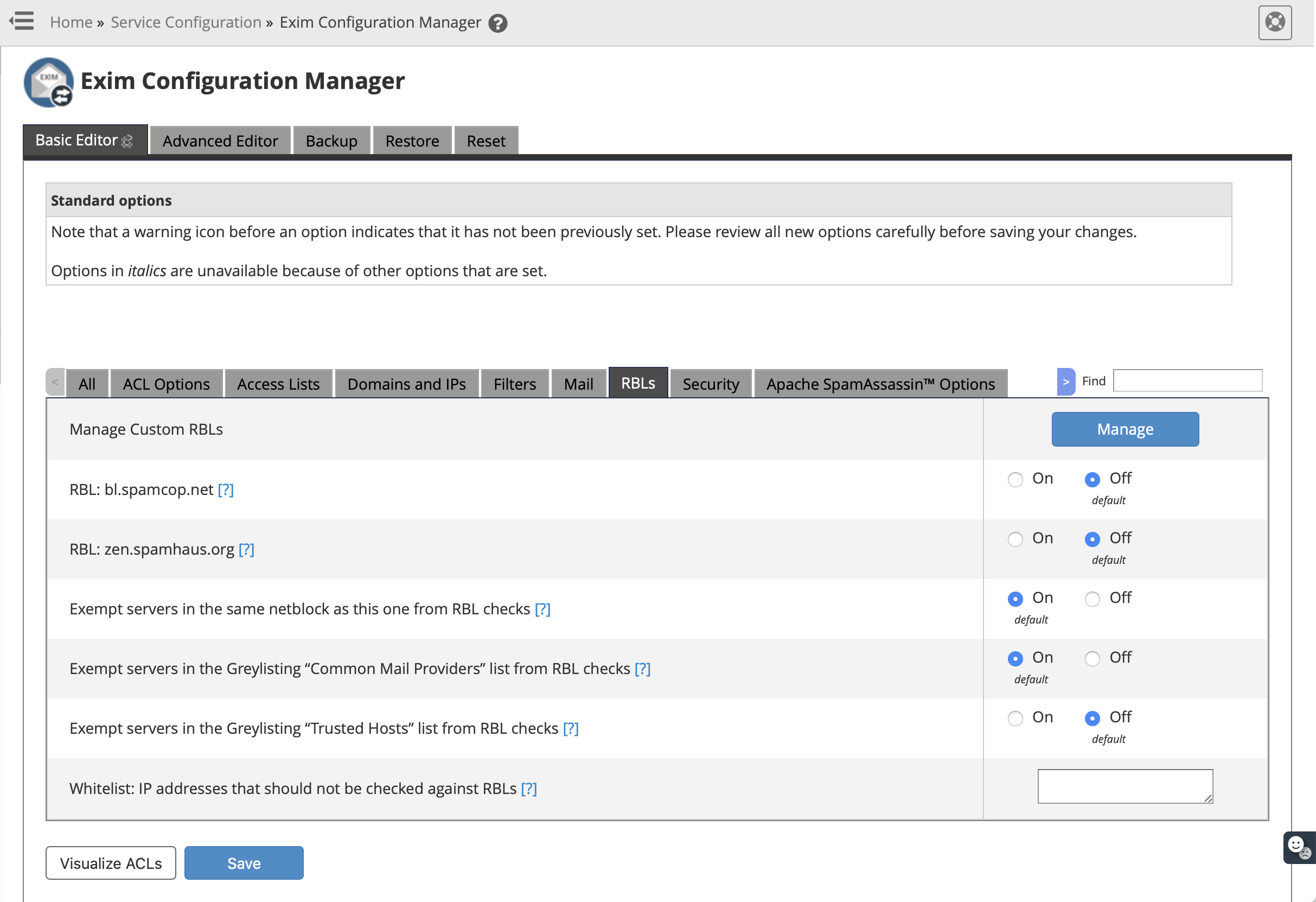

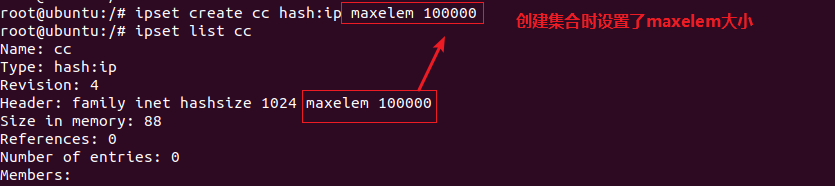

IPVS (IP Virtual Server) implements transport-layer load balancing, usually called Layer 4 LAN switching, as part of the Linux kernel. IPVS runs on a host and acts as a load balancer in front of a cluster of real servers. IPVS can direct requests for TCP- and UDP-based services to the real servers, and make services of the real servers appear as virtual services on a single IP address.

IPVS mode was introduced in Kubernetes v1.8, went beta in v1.9, and reached GA in v1.11. IPTABLES mode was added in v1.1 and has been the default operating mode since v1.2. Both IPVS and IPTABLES are based on netfilter. The differences between IPVS mode and IPTABLES mode are as follows:

1. IPVS provides better scalability and performance for large clusters.
2. IPVS supports more sophisticated load balancing algorithms than IPTABLES (least load, least connections, locality, weighted, etc.).
3. IPVS supports server health checking, connection retries, etc.

IPVS proxier employs IPTABLES for packet filtering, SNAT, and masquerade. Specifically, IPVS proxier uses ipset to store the source or destination addresses of traffic that needs to be dropped or masqueraded, so that the number of IPTABLES rules stays constant no matter how many services exist. The ipset sets that IPVS proxier uses are listed above.
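The idea of using ipset to keep the number of IPTABLES rules constant can be illustrated with a small Python sketch. It is a simplified model under stated assumptions, not what kube-proxy emits: the helper names, the set name `EXAMPLE-SERVICE-SET`, and the `MARK-MASQ` target label are hypothetical, and the rule strings only imitate iptables syntax.

```python
def rules_without_ipset(services):
    """One rule per service entry: the rule list grows with the number of services."""
    return [
        f"-d {ip} -p {proto} --dport {port} -j MARK-MASQ"
        for ip, port, proto in services
    ]


def rules_with_ipset(services, set_name="EXAMPLE-SERVICE-SET"):
    """A single rule matching a set: only the set membership grows."""
    # Set members stand in for ipset entries keyed by address, protocol, and port.
    members = {(ip, proto, port) for ip, port, proto in services}
    rules = [f"-m set --match-set {set_name} dst,dst -j MARK-MASQ"]
    return rules, members


if __name__ == "__main__":
    # 100 illustrative services on made-up addresses.
    services = [(f"10.0.0.{i}", 80, "tcp") for i in range(1, 101)]
    per_service = rules_without_ipset(services)
    set_rules, members = rules_with_ipset(services)
    print("rules without ipset:", len(per_service))
    print("rules with ipset:", len(set_rules), "set members:", len(members))
```

Adding a service in the second model changes only the set contents, so the rule chain traversed for each packet stays the same length.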
